- INSTALL APACHE SPARK FROM UBUNTU INSTALL

- INSTALL APACHE SPARK FROM UBUNTU CODE

- INSTALL APACHE SPARK FROM UBUNTU PASSWORD

- INSTALL APACHE SPARK FROM UBUNTU ZIP

In the following terminal commands, we copied the contents of the unzipped spark folder to a folder named spark.

INSTALL APACHE SPARK FROM UBUNTU ZIP

The download is a zip file (a compressed .tgz archive). Before setting up Apache Spark on the PC, unzip the file: open a terminal and run the tar command from the location of the zip file.

To run PySpark in a Jupyter (IPython) notebook, set the driver variables in front of the pyspark command:

    PYSPARK_PYTHON=python3 PYSPARK_DRIVER_PYTHON=ipython3 PYSPARK_DRIVER_PYTHON_OPTS="notebook" pyspark

This concludes the Word Count program in Jupyter.

INSTALL APACHE SPARK FROM UBUNTU CODE

Step 2: Create an RDD from the input file. We start by creating a Spark RDD called “text”. The textFile method loads the Shakespeare text file. You should specify the absolute path of the input file.

Step 3: Split the words from the “text” RDD. Here we need to extract all the words in the “text” RDD by using the given regular expression. For this we use a tokenize function, which receives a piece of text and returns a list of the words. flatMap is used to transform an RDD of length N into N collections, and then flattens these into a single RDD of results. We will be using the “operator” package here: we import add, which is used as a closure for addition. Use the print command to print the results of the “words” RDD.

Step 4: Add up the number of occurrences for every key. In this step, reduceByKey creates a new RDD “count”, which keeps the count of every key received from the previous RDD “words”.

This concludes the Word Count program in Spark.

Here we'll be running a simple word count program in Python. Open a terminal in Ubuntu, type the word count commands, and execute them. This concludes the Word Count program in Python.

Here we'll be running a simple word count program in Jupyter. Open a terminal in Ubuntu and paste the notebook launch command, which redirects you to the IPython notebook in the web browser.
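Steps 2 to 4 can be sketched roughly as follows. This is a minimal sketch, not the original code: the regular expression, the function names `word_count` and `local_word_count`, and the intermediate `(word, 1)` pairing before reduceByKey are assumptions, since the original snippets are not shown. `sc` is the SparkContext that the pyspark shell provides.

```python
import re
from collections import Counter
from operator import add

# Assumed pattern: the original regular expression is not shown in the text.
WORD_RE = re.compile(r"[a-z0-9']+")


def tokenize(text):
    """Receive a piece of text and return a list of its lowercase words."""
    return WORD_RE.findall(text.lower())


def word_count(sc, path):
    """Run Steps 2-4 on a live SparkContext `sc` (hypothetical helper)."""
    text = sc.textFile(path)        # Step 2: RDD with one element per line
    words = text.flatMap(tokenize)  # Step 3: flatten per-line word lists
    # Step 4: pair every word with 1 (assumed step), then sum per key.
    count = words.map(lambda w: (w, 1)).reduceByKey(add)
    return count


def local_word_count(lines):
    """Plain-Python stand-in for the same pipeline, usable without Spark."""
    counts = Counter()
    for line in lines:
        for word in tokenize(line):
            counts[word] = add(counts[word], 1)
    return dict(counts)
```

Inside the pyspark shell, something like `word_count(sc, "/absolute/path/to/shakespeare.txt").take(10)` would show the first few pairs; the path here is a placeholder. `local_word_count` only exists to check the tokenizer on a few lines before running the Spark job.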

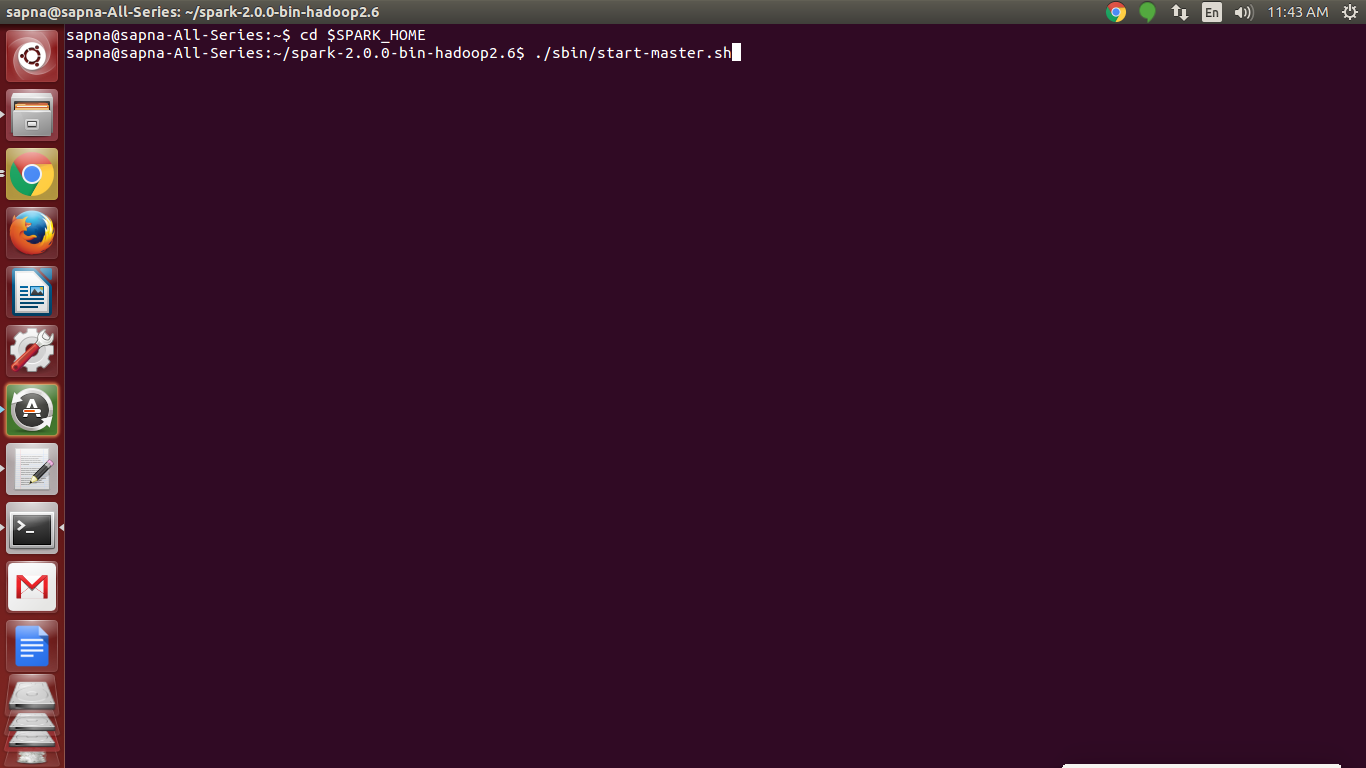

Here we'll be running a simple word count program in Spark. These are the necessary steps that need to be carried out. First, open the terminal and type pyspark to get Spark running in Python. You'll find something very similar to the attached screenshot.

Step1: Type the following command to unzip Spark:

    ~$ tar -xzf spark-2.0.1-bin-hadoop2.4.tgz

Step2: Move the unzipped Spark folder into the home location on Ubuntu. Either (i) use the command

    ~$ mv spark-2.0.1-bin-hadoop2.4 /srv/spark-2.0.1

or (ii) simply copy and paste it into the home location.

Step3: Set the path. SPARK_HOME must point to the folder where Spark was actually placed in Step2:

    export SPARK_HOME=/home/spark
    export PATH=$SPARK_HOME/bin:$PATH

Type pyspark in the terminal to check whether the environment is working:

    ~$ pyspark

This will probably result in a py4j error, for which we have to set some additional paths using the following terminal commands:

    export PYTHONPATH=$SPARK_HOME/python/:$PYTHONPATH
    export PYTHONPATH=$SPARK_HOME/python/lib/:$PYTHONPATH
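If the py4j error persists after the PYTHONPATH exports, it can help to check which entries are actually missing. The following is a minimal sketch under stated assumptions: the helper names are hypothetical, and it assumes py4j ships as a `py4j-*-src.zip` archive under `$SPARK_HOME/python/lib`, which may differ between Spark builds.

```python
import glob
import os


def spark_python_paths(spark_home):
    """Return the entries PySpark is expected to need on the Python path."""
    python_dir = os.path.join(spark_home, "python")
    lib_dir = os.path.join(python_dir, "lib")
    # Assumption: the py4j sources are packaged as py4j-*-src.zip in python/lib.
    py4j_zips = sorted(glob.glob(os.path.join(lib_dir, "py4j-*-src.zip")))
    return [python_dir, lib_dir] + py4j_zips


def missing_from_pythonpath(spark_home, pythonpath):
    """List the expected entries that are absent from a PYTHONPATH string."""
    present = {
        os.path.normpath(entry)
        for entry in pythonpath.split(os.pathsep)
        if entry
    }
    return [
        path
        for path in spark_python_paths(spark_home)
        if os.path.normpath(path) not in present
    ]
```

Calling `missing_from_pythonpath(os.environ.get("SPARK_HOME", ""), os.environ.get("PYTHONPATH", ""))` in a Python session lists what still needs to be exported. If a py4j archive shows up in that list, adding the archive itself to PYTHONPATH, rather than only its directory, is worth trying.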

INSTALL APACHE SPARK FROM UBUNTU INSTALL

Once you log in, your Ubuntu machine is all set to run. Now, on this virtual Ubuntu machine, we need to get Python and Spark running.

We have to install IPython notebooks; basically, we are downloading and installing Anaconda on the virtual Ubuntu machine. From here we'll be running IPython notebooks.

Spark can be installed only if you have Java installed on your machine, which brings us to our next step: getting Java installed. To check whether Java is installed on the machine, run a Java version check at the terminal prompt. After installing Java, verify the installation the same way.

Download and install the pre-built version of Spark, then follow the steps in this guide to extract it and set the path.
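The Java check can also be scripted from Python, which is convenient once Anaconda is installed. This is a minimal sketch: the function name `java_version` is hypothetical, and it assumes the check is `java -version`, since the original commands are not shown.

```python
import shutil
import subprocess


def java_version():
    """Return the first line printed by `java -version`, or None if unavailable."""
    java = shutil.which("java")  # look for the java executable on PATH
    if java is None:
        return None
    result = subprocess.run([java, "-version"], capture_output=True, text=True)
    # `java -version` normally writes to stderr, so check that stream first.
    output = (result.stderr or result.stdout).strip()
    return output.splitlines()[0] if output else None


if __name__ == "__main__":
    version = java_version()
    print(version if version else "Java not found on PATH; install it before Spark.")
```

A return value of None means Java is not on the PATH, so it has to be installed before continuing with Spark.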

INSTALL APACHE SPARK FROM UBUNTU PASSWORD

Step3: Create the new virtual machine and load the Ubuntu image file. Now you are all set to use Linux as the guest on top of Windows, which is the host machine.

Step4: Start Ubuntu by entering the username osboxes and its password.